Snowflake is probably going to be in the top 5 of any “10 most popular” list of data warehouses you see. Not surprising considering how flexible and easy to use Snowflake’s SaaS is compared to other Cloud or on-premise Data Warehouse solutions. It is also easily accessible thanks to its partnership with the cloud leaders, AWS, Azure, and GCP. Snowflake’s pricing is based on the volume of data you store and the compute time you use – and you may see unexpected spending if you fail to follow best practices. To prevent those nasty surprises, this article examines how you can manage Snowflake costs.

This is part of a series of articles about Snowflake Pricing

Related content:

-

Read our guide to Snowflake Cost Optimization

Why Do Costs Spiral Out Of Control?

Firstly, why is Snowflake's service a “handle with care” prospect? The reason is the sheer volume and scale of data and its processing. Not only does Snowflake store data, but it also provides a platform that supports business intelligence, data analysis, reporting, and more. If you collate a massive data lake, then the processing power required to analyze that can be significant.

Various components are taken into account to determine Snowflake’s pricing:

- Buying Options: On-Demand vs. Pre-Paid

- Virtual Warehouse Size / Compute Resources

- Cloud Services

- Data Storage

- Data Transfer

One of the key areas of control you have over billing outcomes is whether you select on-demand vs. provisioned modes; let’s examine this further.

Snowflake On-Demand

If you prefer the pay-as-you-use approach, Snowflake’s on-demand mode may be the best fit. This means that Snowflake scales as your workloads change, and you are charged for its usage on a per-second basis (with a 1-minute minimum charge).

The on-demand capacity mode makes sense for data lakes with a low compute load, unpredictable traffic, or unknown workloads. The on-demand model is effective as, after an idle time of around 5 minutes, Snowflake will auto-suspend your warehouse and, when you execute a new query, resumes automatically.

Pre-Paid Snowflake

If you have predictable data traffic and accurate forecasting over user demand, the provisioned capacity mode may be a better fit than on-demand. Provisioned capacity mode means that you specify your usage requirements.

For more information on pricing in Snowflake, you can sign up to view the Pricing Guide. There are also several third-party tools out there that will give you reasonable ballpark estimates.

Optimize and Control Snowflake Costs

The virtual warehouses and compute clusters are really important components of Snowflake to target when optimizing costs. You need to run compute clusters or virtual warehouses to be able to execute SQL statements or load and unload data from tables.

Because the costs you generate when using Snowflake are correlated to your usage, this means that optimizing usage can save your budget. You can’t manage what you can’t measure, and so, optimizing usage is driven by tracking:

- Analyze your usage data to identify expensive workflows

- Leverage the account usage views to track history, performance, and cost

- Formulate roles by business functions to track department spending, i.e., perform IT showback

Depending on your business’ function, you may be in a position to undertake various optimizations in response to the data you collect. You might be able to right-size to reduce Snowflake costs. Perhaps you don’t need all your data warehouses to function 24/7. In this case, you can configure resource monitors to shut down data warehouses when they reach predefined credit usage thresholds.

Next, let’s consider the best practice approach to optimizing Snowflake usage and, therefore, spending.

Virtual Warehouses

Virtual Warehouses are the backbone of compute in Snowflake—and one of the biggest levers you have for cost control. Since Snowflake charges per-second usage based on warehouse size, right-sizing and auto-suspend configurations are critical.

Avoid leaving warehouses running idle by setting auto-suspend and auto-resume parameters. Use smaller warehouse sizes for lightweight queries and scale up only when concurrency or performance demands it.

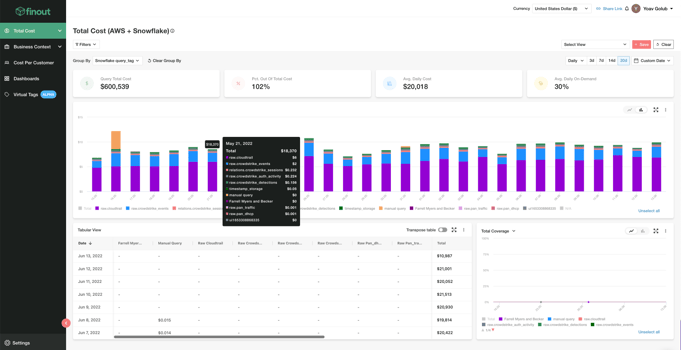

Finout helps you track warehouse usage patterns at a granular level, so you can identify underutilized resources, avoid waste, and allocate compute spend more accurately.

Scale Appropriately

It is important to scale up or out appropriately. For example, if workloads are backing up in a queue, then consider adding more compute clusters. If, however, workloads slow during execution, then it may be necessary to increase your data warehouse size.

Set Query Control Parameters

Both query processing and concurrency can be controlled by configuring the following parameters:

- Max_concurrency_level

This parameter determines the level of SQL statement (i.e., queries and DML) concurrency, which are executed by Snowflake, and, when the level is reached, the operation performed depends on whether the warehouse is a single-cluster or multi-cluster warehouse.

This parameter does not limit the number of statements that can be executed concurrently by a warehouse cluster. When each statement is sent to the warehouse, Snowflake allocates some resources for executing the statement, and if there is not enough resource, the statement is queued, or with a multi-cluster setup, additional clusters are started.

- Statement queued timeout in seconds

When the warehouse is already under load, i.e., busy, and executing another query would lead to performance degradation, the query is queued and waits until the necessary resources become available. Certain parameters configure that queue timeout for the SQL query. e,g;

- A warehouse has a queued timeout of 120 seconds.

- The queued timeout for the session is set to 60 seconds.

The session timeout takes precedence.

- Statement timeout in seconds

This parameter controls the amount of time, in seconds, after which a running SQL statement (query, DDL, DML, etc.) is canceled by the system. For more details on these parameters, see the official Snowflake documents.

Optimize Data Loading

How you load data and how efficient your queries are, represent the more important Snowflake cost control factors.

By default, data is automatically partitioned in Snowflake according to its ingestion order. It can, however, be more efficient to sort the data in its cloud bucket before loading rather than sorting the data with an `ORDER BY` after loading.

Compression also helps to improve load times, and not all options are made equal. A CSV file that has been Gzipped loads 2–3 times faster than Parquet or ORC.

Even the move command matters: COPY INTO is more efficient than INSERT because it applies an optimized SQL bulk-loading process.

If you can apply multi-threaded parallel loading, this can speed up the loading process and reduce that per-second count.

Optimize Your Scripts

It is always worth investing in code reviews because poorly optimized code can slow down any database or data warehouse. It is a better ROI to run code optimizations on your SQL than to pay for more compute power. SQL code and processes optimize well, ensure all your devs are working to the same standards:

- Delete all temporary and transient tables when done

- Use CREATE or REPLACE not CREATE TABLE AS

- Apply COPY INTO, not INSERT INTO

- Leverage staging tables to transform imported data

- Remember those ANSI Joins are more efficient

- Remember to sort rather than ORDER BY where possible

- Don’t tolerate redundancy; use DISTINCT or GROUP BY

Optimize Your Storage Practices

Data storage costs can add up fast. To save your budget, make sure you:

- Use S3 buckets in the same region as your data warehouse

- Match your bucket specifications with your data transfer expectations, e.g., are they organized by date or by application?

- Leverage parallel loading by restricting file size to 60–100 MB

- Avoid using materialized views unless required (e.g., pre-aggregating)

Snowflake Cost Observability

The experience gained through managing a traditional SQL database translates well when moving to Snowflake. However, there are considerations that are specific to the cloud environment.

It is important to be able to predict how a configuration change will impact the price of your unit cost. Finout recommends adopting a FinOps approach to cost control to enhance visibility over the components of your data warehouse that have budget implications. Effective FinOps means that teams must be consuming meaningful data, and integrating a cloud observability platform allows FinOps to drive this strategy.

Using a cloud cost observability platform like Finout will show you, at a granular level, where your investment is spent on Snowflake and enable your developers to forecast how decisions will impact your cost optimization efforts. Contact Finout today to learn how our platform can inform your Snowflake spending.

One platform. Every team. Complete control.

Built for the complexity, speed, and ownership demands of modern cloud and AI environments