What is Kubernetes?

Kubernetes is an open-source system used to manage and orchestrate containers in a microservices architecture, and it is massively popular, especially in the cloud. However, it is highly customizable by design, which means that it needs a well-defined vision to be implemented successfully.

One of the challenges companies face when implementing Kubernetes (K8s) is managing it according to best practices. One best-practice approach to cost control is to use spot nodes. This article introduces how to implement spot nodes so that your team can reduce its spend.

What are Spot Nodes?

Of the many benefits that arise from using the cloud, cost savings is one of the most important.

Spot nodes are one of the cost-saving strategies that may be applied when creating Kubernetes clusters. Spot Instances let cloud providers sell spare capacity, which means that their availability is closely coupled with demand.

Different providers use different names for spot nodes (Spot Instances on AWS, Spot VMs on GCP and Azure), but the functionality offered by “spot usage” is the same. Importantly, only workloads that are fault-tolerant and can withstand the loss of the assigned VM are suitable.

Implementation is worth the effort because Spot VMs can significantly reduce your costs. On AWS, the instances were traditionally acquired through a bidding process in which the customer specifies the price per hour they are willing to pay; today that value acts as an optional maximum price. As per this AWS explainer:

“When an EC2 instance becomes available at that price, the customer's instance will run. The instance will be cut off when the Spot price increases and exceeds the customer's bid. As long as the customer doesn't cancel the bid, the instance will be reactivated when the price falls again. Instances may also be terminated when the customer's bid price equals the market price. This can happen when demand for capacity rises or when supply fluctuates.”
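
The lifecycle described in the quote can be sketched in a few lines of Python. This is only an illustration: the function name, the bid, and the price series are made up, and treating a price equal to the bid as an interruption is a simplifying assumption based on the last part of the quote.

```python
def instance_timeline(bid: float, spot_prices: list) -> list:
    """Return "running" or "interrupted" for each observed spot price."""
    # Assumption: a price exactly equal to the bid counts as an interruption,
    # because the explainer notes that termination may happen at equality.
    return ["running" if price < bid else "interrupted" for price in spot_prices]


if __name__ == "__main__":
    # Hypothetical hourly spot prices in USD against a bid of $0.05.
    prices = [0.03, 0.04, 0.06, 0.05, 0.02]
    for price, state in zip(prices, instance_timeline(0.05, prices)):
        print(f"price={price:.2f} -> {state}")
```

The instance comes back in the last step because the price has fallen below the bid again, which matches the "reactivated when the price falls" behaviour in the quote.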

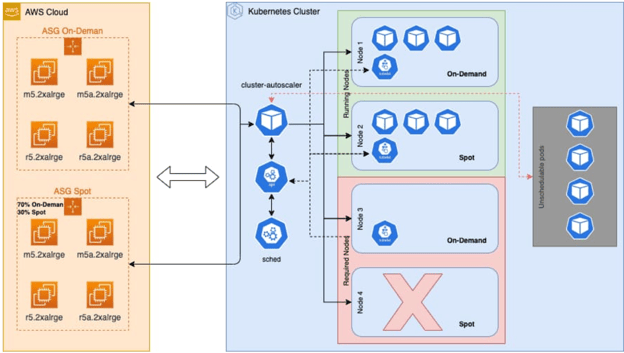

Figure 1: Simple Architecture of Kubernetes with Spot Instances

When Should You Use Spot Nodes?

Spot nodes can help in situations where you apply vertical and horizontal scaling, thanks to the flexible pricing. The discount can range from 50–90% on AWS and 60–91% on GCP, and can reach up to 90% on Azure.

Spot and on-demand node groups scale independently of each other. When we launch applications that run driver and executor pods, these pods can be scheduled in different node groups according to their resource requirements. This adds resilience and cost optimization to the system by reducing job failures due to a lack of resources.
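
To make these percentages concrete, here is a minimal sketch of the arithmetic. The hourly price, node count, and 730-hour month are made-up illustrative figures, not provider quotes, and the function names are hypothetical.

```python
def monthly_cost(hourly_price: float, nodes: int, hours: float = 730.0) -> float:
    """Return the monthly cost of running `nodes` nodes at `hourly_price`."""
    return hourly_price * nodes * hours


def spot_cost(on_demand_cost: float, discount: float) -> float:
    """Apply a fractional spot discount (0.0 to 1.0) to an on-demand cost."""
    if not 0.0 <= discount <= 1.0:
        raise ValueError("discount must be between 0 and 1")
    return on_demand_cost * (1.0 - discount)


if __name__ == "__main__":
    # Hypothetical node group: 10 nodes at $0.10 per hour on demand.
    on_demand = monthly_cost(0.10, nodes=10)
    for discount in (0.5, 0.9):
        print(f"{discount:.0%} discount: ${spot_cost(on_demand, discount):,.2f} per month")
```

Even the low end of the quoted range halves the bill for this node group, which is why it is worth checking which workloads can tolerate interruption.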

When Shouldn’t You Use Spot Nodes?

There are some risks when using spot instances. If you run stateful applications such as Elasticsearch, databases, or caches, there is a possibility of losing data. Take note of the following spot readiness checklist; if your workload meets these guidelines, spot is likely to be a good fit.

Spot Readiness Checklist

As mentioned above, spot nodes are risky for stateful workloads, so it is vital to use them only in line with best practices for Kubernetes.

Deployments, not StatefulSets

Kubernetes has controllers that manage Deployments, StatefulSets, and other workload types. Because Deployments are intended for stateless workloads, it makes sense to run Deployments on spot nodes.

StatefulSets, however, run stateful workloads, so it is not best practice to use spot nodes in combination with StatefulSets. When a spot instance disappears, any state-dependent data held on it is lost. It is possible to use temporary volumes on spot nodes to limit data loss, but some workloads cannot recover from their midway data and would have to be re-run.

1. Don’t Let Your Replicas == 1!

Replica count is another important consideration when you use spot nodes. It is dangerous to set the replica count to 1 and then schedule that workload on a spot instance. Imagine you experience a spot outage: the entire workload will go down. To avoid that outage, set the replica count to a value greater than 1.

2. Consider your Deployment Strategy

The deployment strategy is critical if you intend to use Kubernetes with spot nodes. There are several strategies for deploying your applications.

A rolling update may be triggered by updating the image of your pods via kubectl set image; this automatically starts the rollout. To refine your deployment strategy, adjust the spec.strategy parameters in the manifest file. There are two optional parameters for granular control: maxSurge and maxUnavailable.

- maxSurge limits the number of pods that the deployment may create above the desired replica count during the update. Specify this either as a whole number, e.g., 10, or as a percentage of the desired number of pods, e.g., 20% (rounded up to a whole number). If you don’t set maxSurge, it defaults to 25%.

- maxUnavailable specifies the maximum number of pods that may be unavailable during the rollout; a percentage is rounded down to a whole number. If a deployment has a rolling update strategy configured, the resulting threshold (calculated from maxUnavailable in rounded-down integer form and the replica count) should, as a best practice, be 0.9 rather than 0.5.

At least one of these parameters must be larger than zero. By changing the values of these parameters, you can define other deployment strategies, as shown in Table 1.
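
The interaction between the two parameters is easier to see with numbers. The sketch below is not the Kubernetes implementation; it is a simplified, hypothetical helper that resolves both values for a given replica count, assuming percentages of maxSurge round up and percentages of maxUnavailable round down, and that it is acceptable to simply reject the case where both resolve to zero.

```python
def resolve(value, replicas: int, round_up: bool) -> int:
    """Turn an absolute number or a percentage string such as "25%" into a pod count."""
    if isinstance(value, str) and value.endswith("%"):
        scaled = replicas * int(value[:-1])
        # Integer arithmetic avoids floating-point surprises when rounding.
        return -(-scaled // 100) if round_up else scaled // 100
    return int(value)


def rollout_bounds(replicas: int, max_surge="25%", max_unavailable="25%"):
    """Return (maximum pods during the rollout, minimum available pods)."""
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        # Simplification: this sketch rejects the case instead of adjusting it.
        raise ValueError("maxSurge and maxUnavailable cannot both be zero")
    return replicas + surge, replicas - unavailable


if __name__ == "__main__":
    # With the defaults, 10 replicas may grow to 13 pods and shrink to 8 available.
    print(rollout_bounds(10))  # (13, 8)
    # Surge-only rollout: never drop below the desired replica count.
    print(rollout_bounds(4, max_surge=1, max_unavailable=0))  # (5, 4)
```

On spot nodes, the second value matters most: it is the number of pods you can count on while a rollout and a spot interruption happen at the same time.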

Table 1: Best-Practice Spot Deployment

| Spot Nodes Usage | Recommended | Not Recommended |
| --- | --- | --- |
| Controller Type | Deployments | StatefulSet |
| Replica Count | Replica Count > 1 | 1 |
| Rolling Update | 0.9 | 0.5 |
| Volumes | Temporary | Permanent |
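
Most of this checklist can be applied mechanically to a workload manifest. The following is a rough sketch, assuming the manifest has already been loaded into a Python dictionary; the function name is hypothetical, and the rolling update threshold is left out because it depends on how you choose to calculate it.

```python
def spot_readiness(workload: dict) -> list:
    """Return the reasons a workload is a poor fit for spot nodes (empty if none)."""
    problems = []
    if workload.get("kind") != "Deployment":
        problems.append("controller is not a Deployment")
    spec = workload.get("spec", {})
    # Kubernetes defaults to a single replica when the field is omitted.
    if spec.get("replicas", 1) <= 1:
        problems.append("replica count is not greater than 1")
    volumes = spec.get("template", {}).get("spec", {}).get("volumes", [])
    if any("persistentVolumeClaim" in volume for volume in volumes):
        problems.append("uses a persistent volume")
    return problems


if __name__ == "__main__":
    # Hypothetical manifest: a Deployment with three replicas and scratch storage.
    web = {
        "kind": "Deployment",
        "spec": {
            "replicas": 3,
            "template": {"spec": {"volumes": [{"name": "scratch", "emptyDir": {}}]}},
        },
    }
    print(spot_readiness(web) or "spot ready")
```

An empty result only means that none of these checks failed; it does not replace testing how the application behaves when a node disappears.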

Spot Instances/VMs Best Practices

There are several best practice strategies to apply when using spot nodes:

- Use capacity-optimized allocation strategy

The “Capacity Optimized” allocation strategy automatically makes the most efficient use of available spare capacity while still taking advantage of the significant discounts offered by Spot Instances. One of the best practices for using Spot Instances effectively is to be flexible across a wide range of instance types.

- Be flexible about instance types

Be flexible about which instance types you request. A mixed instances policy gives spot more options when searching across pools. Each spot instance pool is a set of unused instances with the same instance type (for example, m5.large) and Availability Zone.

- Be flexible about zones

Be flexible about which Availability Zones (e.g., us-east-1a) your workload may be deployed in. This gives spot more opportunities to find and allocate your required compute capacity.

- Use proactive capacity rebalancing

Capacity rebalancing complements the capacity-optimized allocation strategy, which enhances availability by deploying across multiple instance types running in multiple Availability Zones. Capacity rebalancing increases the emphasis on availability by automatically attempting to replace spot instances in an auto-scaling group before they are interrupted.

Capacity rebalancing responds to a signal that is sent when a spot instance is at imminent risk of interruption. This rebalance recommendation signal should arrive before the existing two-minute spot instance interruption notice, providing an opportunity to proactively rebalance a workload to new or existing spot instances that are not at an elevated risk of interruption.
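
The difference between the two signals can be summarized as a small decision function. This is a conceptual sketch only: the enum, the function, and the action names are hypothetical and do not correspond to any AWS or Kubernetes API.

```python
from enum import Enum


class Signal(Enum):
    NONE = "none"
    REBALANCE_RECOMMENDATION = "rebalance-recommendation"
    INTERRUPTION_NOTICE = "interruption-notice"


def plan_action(signal: Signal) -> str:
    """Map a spot signal to a (hypothetical) action for the affected node."""
    if signal is Signal.INTERRUPTION_NOTICE:
        # About two minutes remain, so there is only time to drain.
        return "drain-now"
    if signal is Signal.REBALANCE_RECOMMENDATION:
        # Earlier warning: bring up a replacement before draining.
        return "launch-replacement-then-drain"
    return "keep-running"


if __name__ == "__main__":
    for signal in Signal:
        print(f"{signal.value}: {plan_action(signal)}")
```

The earlier signal is what makes the proactive path possible: a replacement node can be ready before the pods on the at-risk node are evicted.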

Conclusion

Spot nodes can be an excellent cost-saving solution when combined with Kubernetes workloads. You should be well placed now to experience the benefits of optimizing the compute cost by using spot nodes with Kubernetes without compromising on availability.

And remember, we are not impartial observers of the world of cost optimization with Kubernetes here at Finout! Finout's Kubernetes Cost monitoring tools empower you to precisely allocate your Kubernetes costs to your business unit. Have you taken it for a test drive yet? Get early access to Finout today.
