What’s Missing in CUR: The Cost of Kubernetes Clusters

Containers and microservices are the cloud-native way of building modern applications. For deploying and managing those containers, Kubernetes has become the de facto container orchestrator in recent years. It provides a strong layer of abstraction that separates applications from infrastructure and lets you focus on your application.

All leading public cloud providers, including AWS, offer managed Kubernetes services for creating clusters and deploying applications. Amazon Elastic Kubernetes Service (Amazon EKS) has been generally available since June 2018, allowing you to create Kubernetes clusters in AWS with a couple of clicks. Nowadays, creating Kubernetes clusters and deploying applications into them is pretty easy.

As with any cloud provider, you will receive a monthly bill from AWS for all the resources your Kubernetes clusters have used. The challenge is calculating the actual cost of each application running inside them. In this blog post, we will discuss the AWS Cost and Usage Report (CUR) and what it takes to merge Kubernetes application data with cost data.

Deep Dive: AWS Cost and Usage Report

Cloud providers, including AWS, bill on a pay-as-you-go basis. This means that you create cloud resources, such as servers, storage disks, or load balancers, and pay at the end of the month for what you used. Cloud providers send you detailed monthly bills that break down each product and its usage. CUR is the most comprehensive report AWS provides for monthly bills.

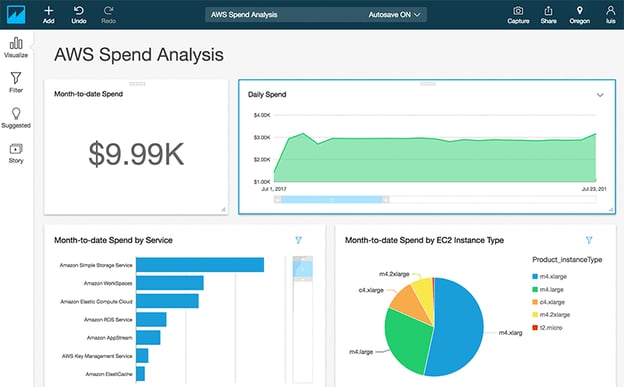

CUR contains line items for each AWS account, AWS product, usage type, and operation. The line items can be aggregated hourly, daily, or monthly. AWS delivers the reports to an Amazon S3 bucket, and from there you can load them into a database, query them, and visualize them. For instance, you can query and visualize CUR with Amazon QuickSight, as shown in Figure 1:

Figure 1: CUR - Visualization (Source: AWS Blog)

With the data provided in CUR, you can see the service, instance types, and daily spend amounts, as well as when and how much you have used services like virtual machines, storage, and networking. However, if you run your applications on Kubernetes, you work with abstractions rather than with the infrastructure itself. You think in terms of namespaces, Kubernetes nodes, deployments, and so on, and CUR has no line items for these, so you won’t see the cost of your deployments or pods in CUR.
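To make the shape of this data concrete, here is a minimal Python sketch that sums cost per day and product from a few CUR-style line items. The column names (`lineItem/UsageStartDate`, `lineItem/ProductCode`, `lineItem/UnblendedCost`) follow the CUR CSV layout; the sample rows and the `daily_cost_by_product` helper are invented for illustration and are not part of any AWS tooling.

```python
from collections import defaultdict
from decimal import Decimal

# Invented sample line items. The column names follow the CUR CSV layout;
# the values are made up for illustration.
SAMPLE_LINE_ITEMS = [
    {"lineItem/UsageStartDate": "2023-05-01T00:00:00Z",
     "lineItem/ProductCode": "AmazonEC2",
     "lineItem/UnblendedCost": "0.192"},
    {"lineItem/UsageStartDate": "2023-05-01T01:00:00Z",
     "lineItem/ProductCode": "AmazonEC2",
     "lineItem/UnblendedCost": "0.192"},
    {"lineItem/UsageStartDate": "2023-05-01T00:00:00Z",
     "lineItem/ProductCode": "AmazonS3",
     "lineItem/UnblendedCost": "0.023"},
    {"lineItem/UsageStartDate": "2023-05-02T00:00:00Z",
     "lineItem/ProductCode": "AmazonEC2",
     "lineItem/UnblendedCost": "0.192"},
]


def daily_cost_by_product(line_items):
    """Sum unblended cost per (day, product code) pair."""
    totals = defaultdict(Decimal)
    for item in line_items:
        day = item["lineItem/UsageStartDate"][:10]  # keep YYYY-MM-DD
        key = (day, item["lineItem/ProductCode"])
        totals[key] += Decimal(item["lineItem/UnblendedCost"])
    return dict(totals)


daily_totals = daily_cost_by_product(SAMPLE_LINE_ITEMS)
for (day, product), cost in sorted(daily_totals.items()):
    print(day, product, cost)
```

Note what the grouping keys are: a day and an AWS product. Nothing in these rows identifies a pod, deployment, or namespace, which is exactly the gap discussed in the rest of this post.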

Challenges of Kubernetes Cost Observability

There are three main groups of challenges you could face when creating Kubernetes cost observability:

- Flexible Infrastructure: Kubernetes resources are flexible and scalable, so resource usage is highly volatile. It is challenging to track the actual usage levels of infrastructure products and distribute overhead expenses.

- Kubernetes API (and abstraction): The Kubernetes API is an abstraction layer that makes it easier for developers to create cloud-native applications. When you deploy an application to Kubernetes, under the hood Kubernetes creates pods, runs containers on servers, and configures load balancers on the cloud provider. You need a solution to track the costs of the infrastructure resources that are hidden behind the Kubernetes API and its abstraction.

- Rightsizing and savings: Kubernetes offers out-of-the-box resource management through resource requests and limits for CPU and memory. However, it is challenging to set those requests based on actual usage in order to minimize waste. With the scalability and volatility of Kubernetes, you need an automated approach for such calculations.
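The rightsizing challenge comes down to comparing what a container requests with what it actually uses. The following Python sketch illustrates that comparison for CPU only. The `ContainerSample` type, the `cpu_waste` helper, and the numbers are hypothetical; in practice the usage figure would come from your metrics system, for example a high percentile of observed usage.

```python
from dataclasses import dataclass


@dataclass
class ContainerSample:
    """Hypothetical record: a CPU request and an observed usage figure."""
    name: str
    cpu_request_millicores: int
    cpu_used_millicores: int  # e.g. a high percentile of observed usage


def cpu_waste(sample):
    """Return (idle millicores, idle fraction of the request)."""
    request = sample.cpu_request_millicores
    idle = max(request - sample.cpu_used_millicores, 0)
    fraction = idle / request if request else 0.0
    return idle, fraction


samples = [
    ContainerSample("api", cpu_request_millicores=1000, cpu_used_millicores=250),
    ContainerSample("worker", cpu_request_millicores=500, cpu_used_millicores=600),
]

report = {s.name: cpu_waste(s) for s in samples}
for name, (idle, fraction) in report.items():
    print(f"{name}: {idle}m idle ({fraction:.0%} of request)")
```

A container that uses more than it requests, like the hypothetical `worker` here, shows no waste under this measure, but it may be a candidate for a larger request rather than a smaller one.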

What CUR Is Missing

Although CUR gives you detailed information about your monthly bills, it is missing several essential Kubernetes-related features:

- Kubernetes nodes: Kubernetes distributes the workload to nodes, which are VM instances in Amazon EC2. It is possible to see the costs of EC2 instances in CUR with all machine tags, but what you actually want is to map the cost of each node to the Kubernetes abstractions running on it. Furthermore, you want to be able to group Kubernetes nodes by team or project and schedule your workload accordingly. This will enable you to allocate the costs of EC2 instances to the correct teams or projects.

- Kubernetes namespaces: Namespaces are logical groupings of resources in Kubernetes. They are one of the building blocks that make the system multi-tenant and scalable. You can provide namespaces for teams, applications, and environments, such as staging, testing, and prod. However, these groupings are not reflected in CUR, so with CUR alone you cannot aggregate costs over the deployments, storage, or any other resources defined in your Kubernetes namespaces.

- Kubernetes networking: Kubernetes provides out-of-the-box networking capabilities for in-cluster and external access, such as ingresses and load balancers. You will see networking costs in CUR, but not the connection to your Kubernetes networking resources.
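One common way to bridge the node and namespace gaps is to split each node's cost across the pods scheduled on it, in proportion to their resource requests. The Python sketch below does this for CPU requests only. It is a simplified illustration under stated assumptions, not the method of any particular tool: the `allocate_node_cost` function, the `__idle__` bucket name, and the sample figures are all hypothetical, and a real allocation would also weigh memory, storage, and network usage.

```python
from collections import defaultdict


def allocate_node_cost(node_hourly_cost, node_cpu_millicores, pods):
    """Split one node's hourly cost across namespaces by CPU request share.

    `pods` is a list of (namespace, cpu_request_millicores) tuples.
    Capacity that no pod requested is reported under "__idle__".
    """
    requested = sum(cpu for _, cpu in pods)
    # Guard against over-requested nodes so shares never exceed the cost.
    denominator = max(node_cpu_millicores, requested)
    if denominator == 0:
        return {}

    costs = defaultdict(float)
    for namespace, cpu in pods:
        costs[namespace] += node_hourly_cost * cpu / denominator

    idle = max(node_cpu_millicores - requested, 0)
    if idle:
        costs["__idle__"] = node_hourly_cost * idle / denominator
    return dict(costs)


# Hypothetical node: 0.20 per hour, 4 vCPUs, three pods from two teams.
pods_on_node = [("team-a", 1000), ("team-a", 1000), ("team-b", 1000)]
allocation = allocate_node_cost(0.20, 4000, pods_on_node)
for namespace, cost in sorted(allocation.items()):
    print(f"{namespace}: {cost:.4f} per hour")
```

The node's hourly cost would come from the EC2 line items in CUR, while the pod list comes from the Kubernetes API. Keeping idle capacity in its own bucket makes the overhead visible instead of silently spreading it across teams.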

Finout: The Cost Observability Platform

Kubernetes offers out-of-the-box features to run scalable and reliable applications in the cloud. Unfortunately, it does not offer any cost management tooling or methods. Similarly, CUR on its own does not provide Kubernetes cost observability. To track the cost of applications running in Kubernetes clusters on AWS, you need a Kubernetes-native solution that can make sense of CUR, which is quite complex.

Finout is a new and groundbreaking tool designed with the FinOps mindset. It works with Kubernetes and CUR to fill in the missing features we mentioned in this post, offering intuitive and accurate reports that are helpful for your business planning. With Finout, you can see cost per pod, deployment, or namespace, leading to complete Kubernetes cost observability. It’s a self-service, no-code platform that treats your costs as a priority metric, helping you attribute each dollar of your cloud bill to its proper place.

